I’ve been playing a bit with all of the cool AI-powered art generation tools that have been unleashed in recent times. I mentioned the other day that I got an invite to DALL-E. Rather than burn through my free credits trying stuff, I’ve been trying random things with Craiyon, a free site that uses DALL-E Mini.

Unrelatedly, I’ve been watching a bunch of horror movies that Netflix has been recommending to me. At some point I veered off into Asian horror films, and there seem to be plenty of them for it to keep recommending. I seem to have hit a local maximum in its “you might like this” algorithm, such that nearly everything it recommends to me these days is an Asian horror film.

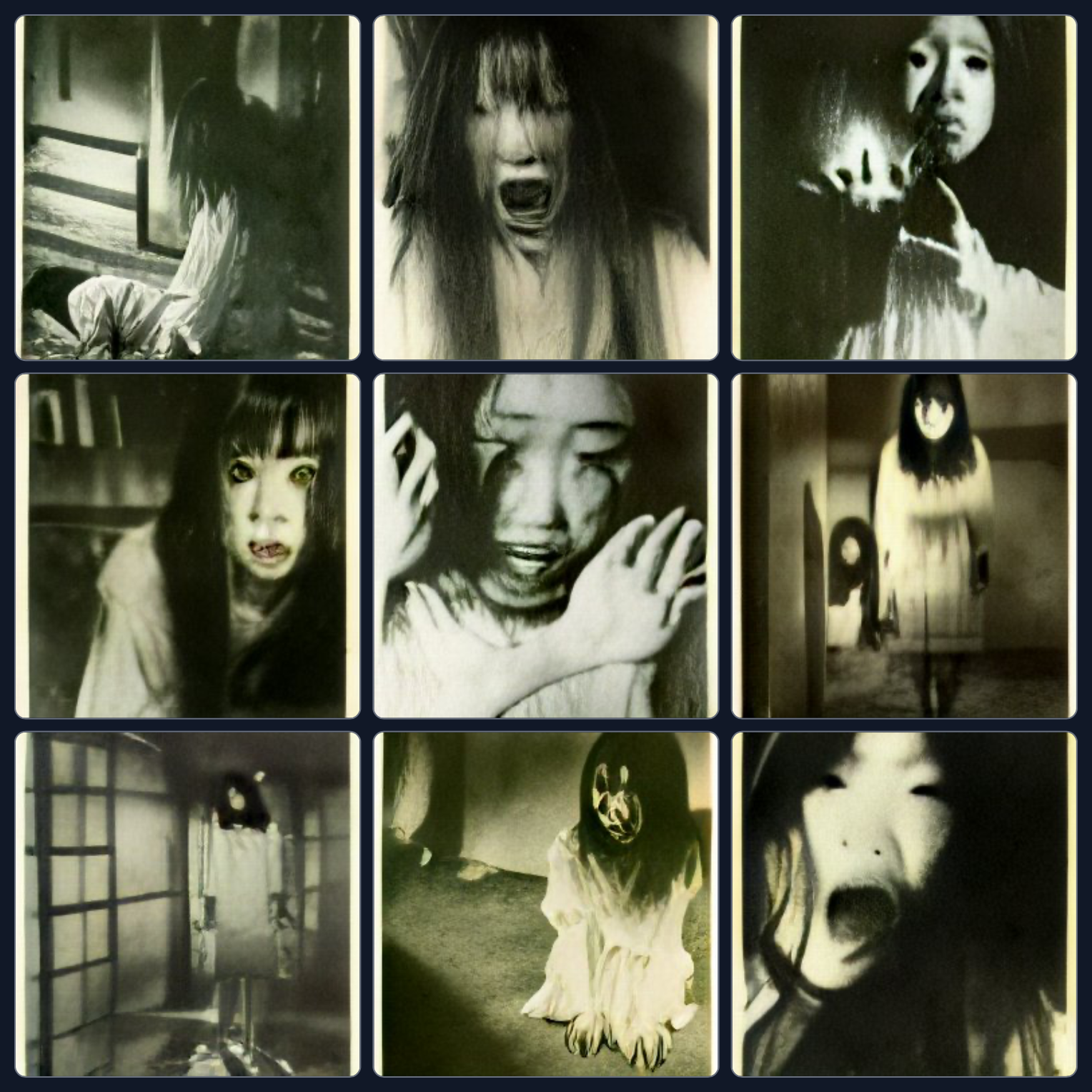

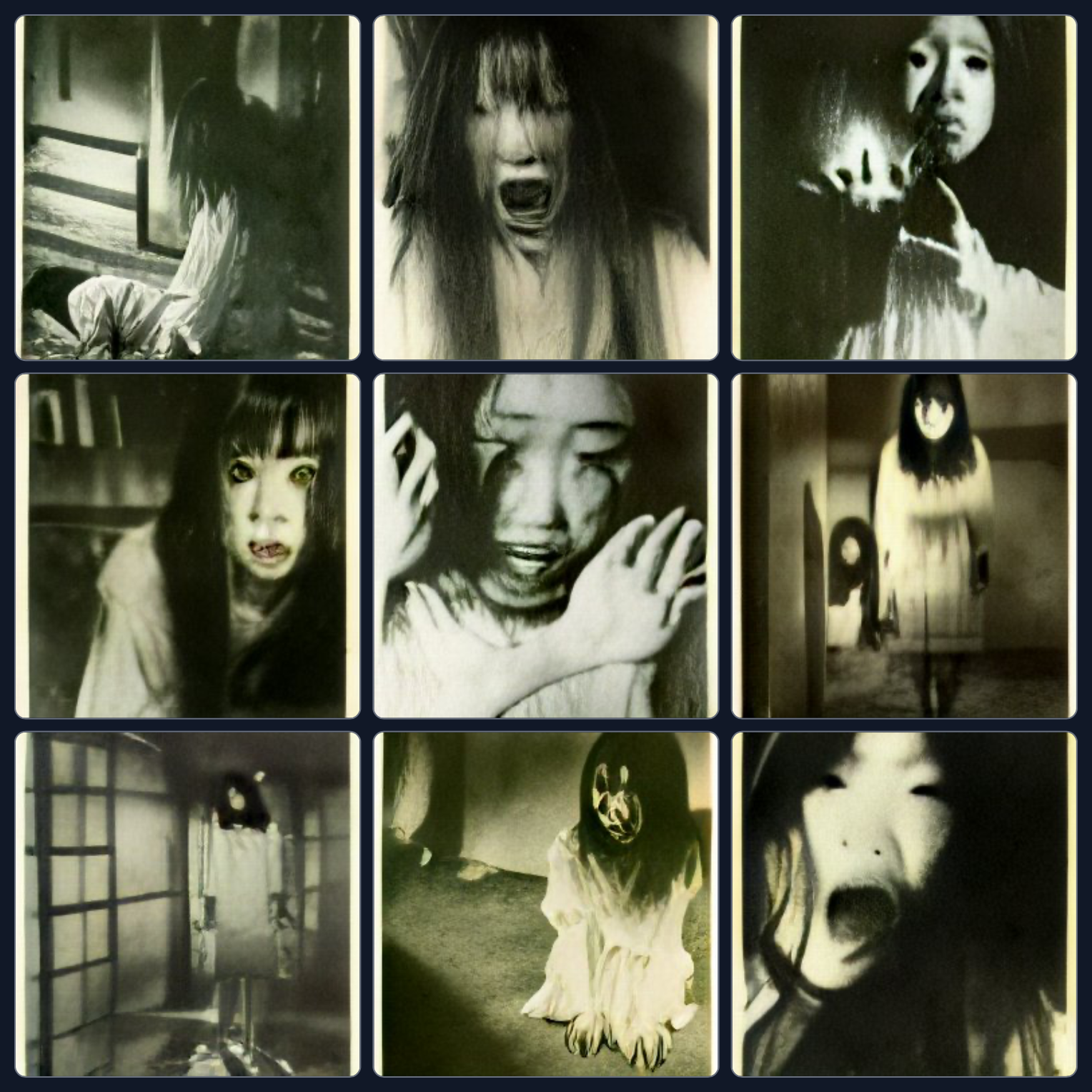

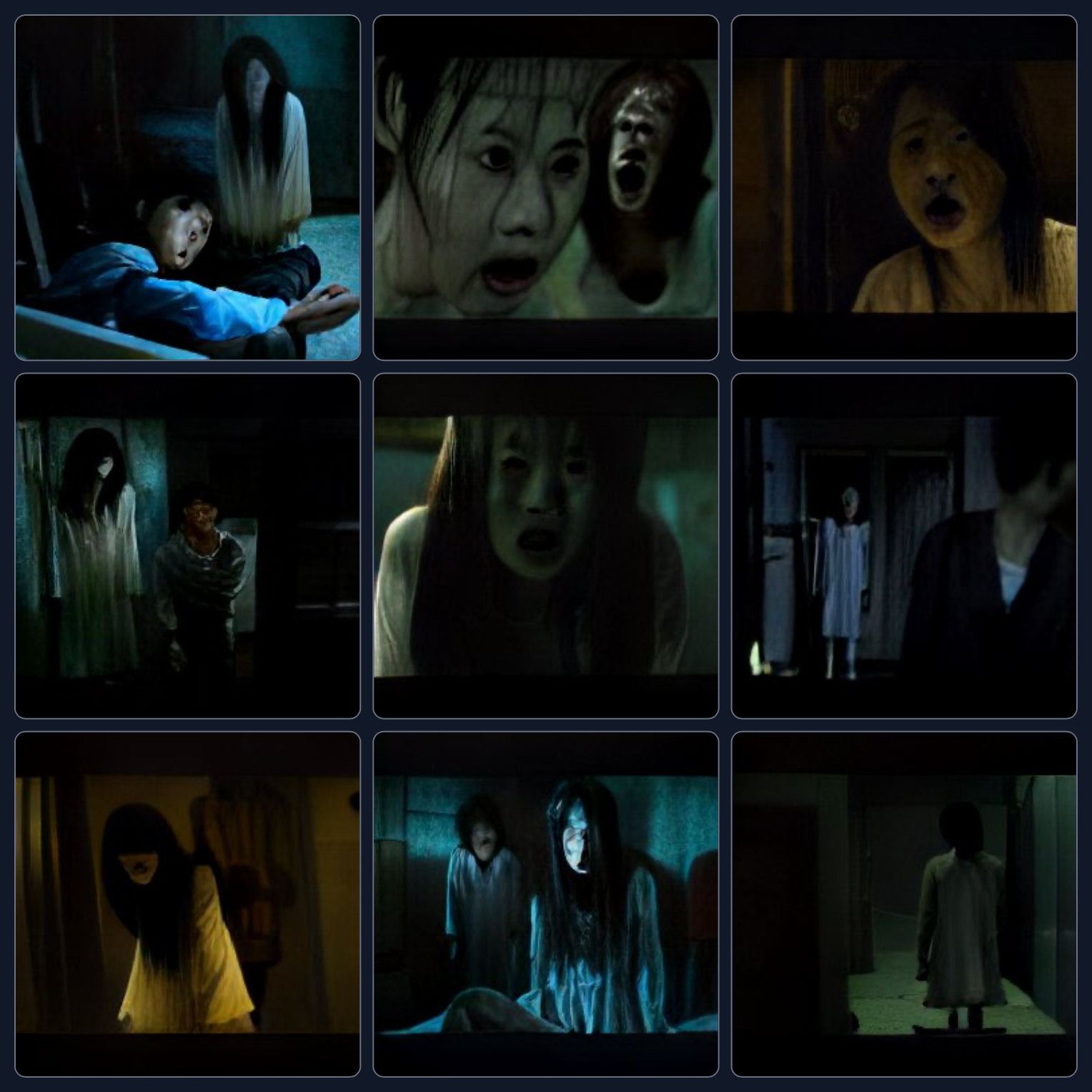

Making the connection between horror films and AI, I decided to try hitting Craiyon with the prompt: Scene from a Japanese horror film. Here’s what it came back with:

Yep. That’s pretty close to what I expected. A creepy long-haired ghost girl, trading on the Yotsuya Kaidan story and the indelible influence it has had on Japanese horror, via The Ring. Notice also the typically Japanese shoji walls, most noticeable in the bottom left frame.

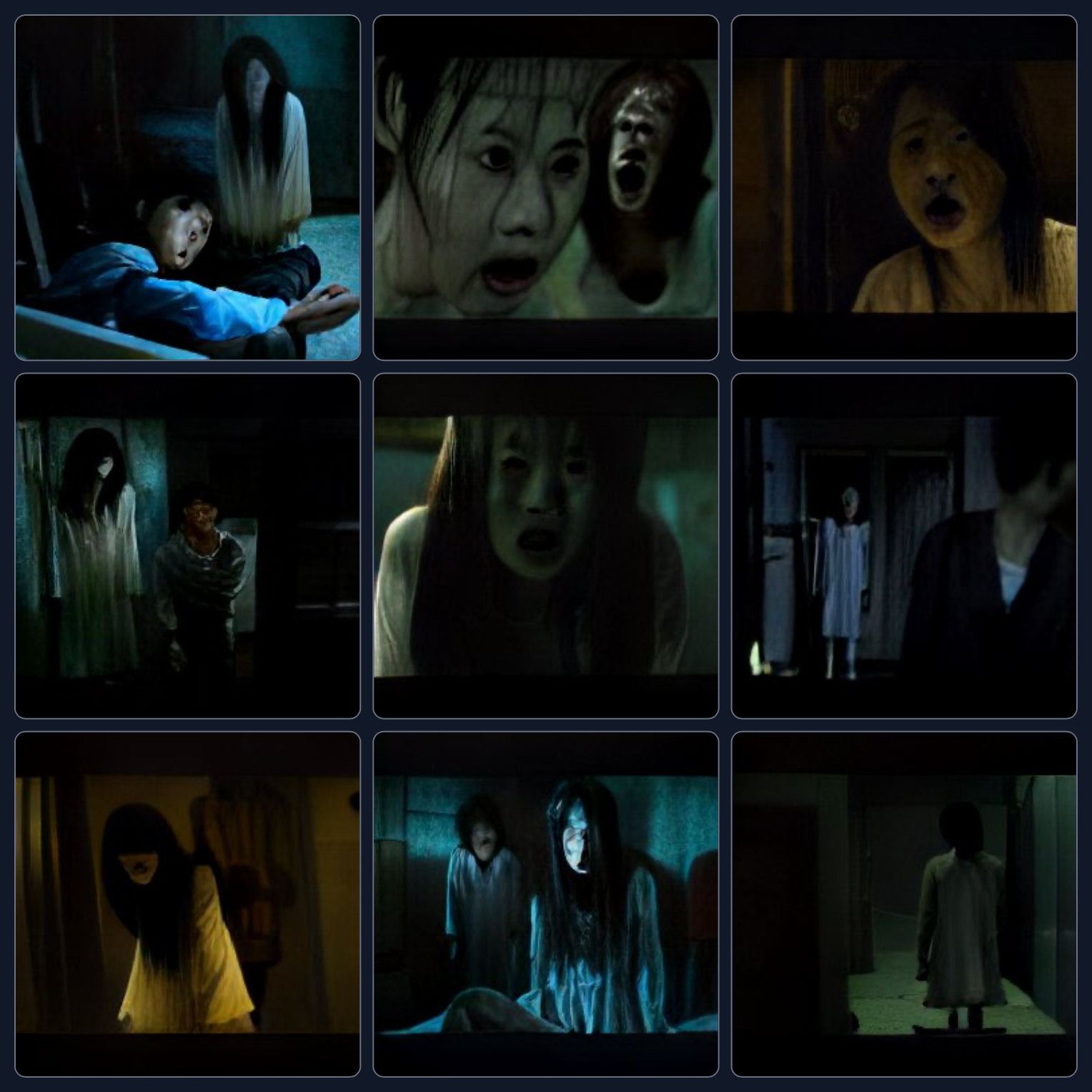

All right. I’ve also been watching some Chinese horror, so let’s try: Scene from a Chinese horror film:

Interesting! It’s mostly similar long-haired ghost girls, but with a vividly different colour palette. The delicate shoji walls have been replaced by brutalist concrete walls. There are also several apparent victims, lying on the floor in shrouds. And some interestingly creepy pictures on some of the walls.

Next up: Scene from a Korean horror film:

More long-haired ghost girls, but with a much greater emphasis on the faces and their blood-curdling expressions. We also have a few boys or young men who might be victims, or perhaps relatives of the ghost girl. The colour palette is a bit more blue/yellow and less green than the Chinese examples.

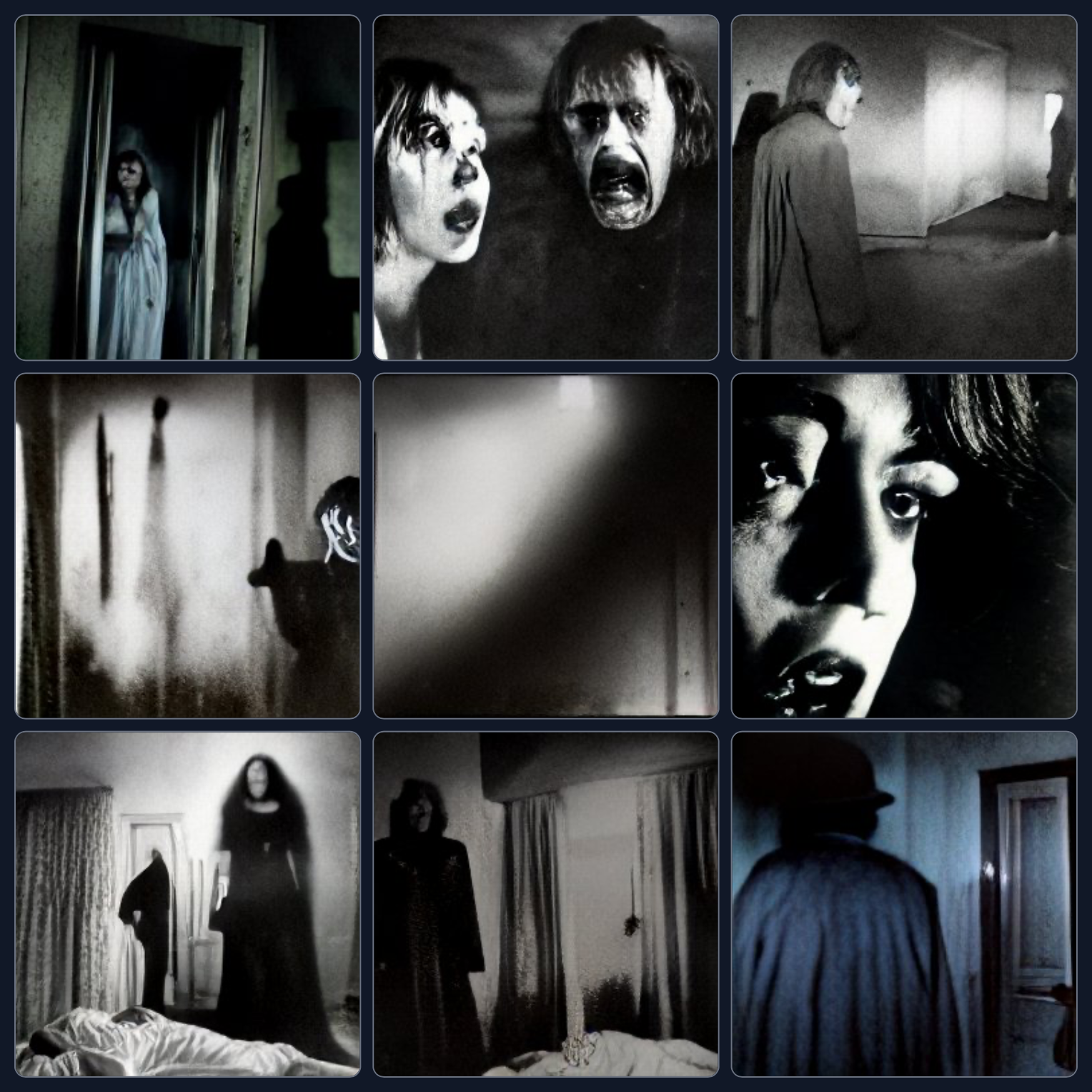

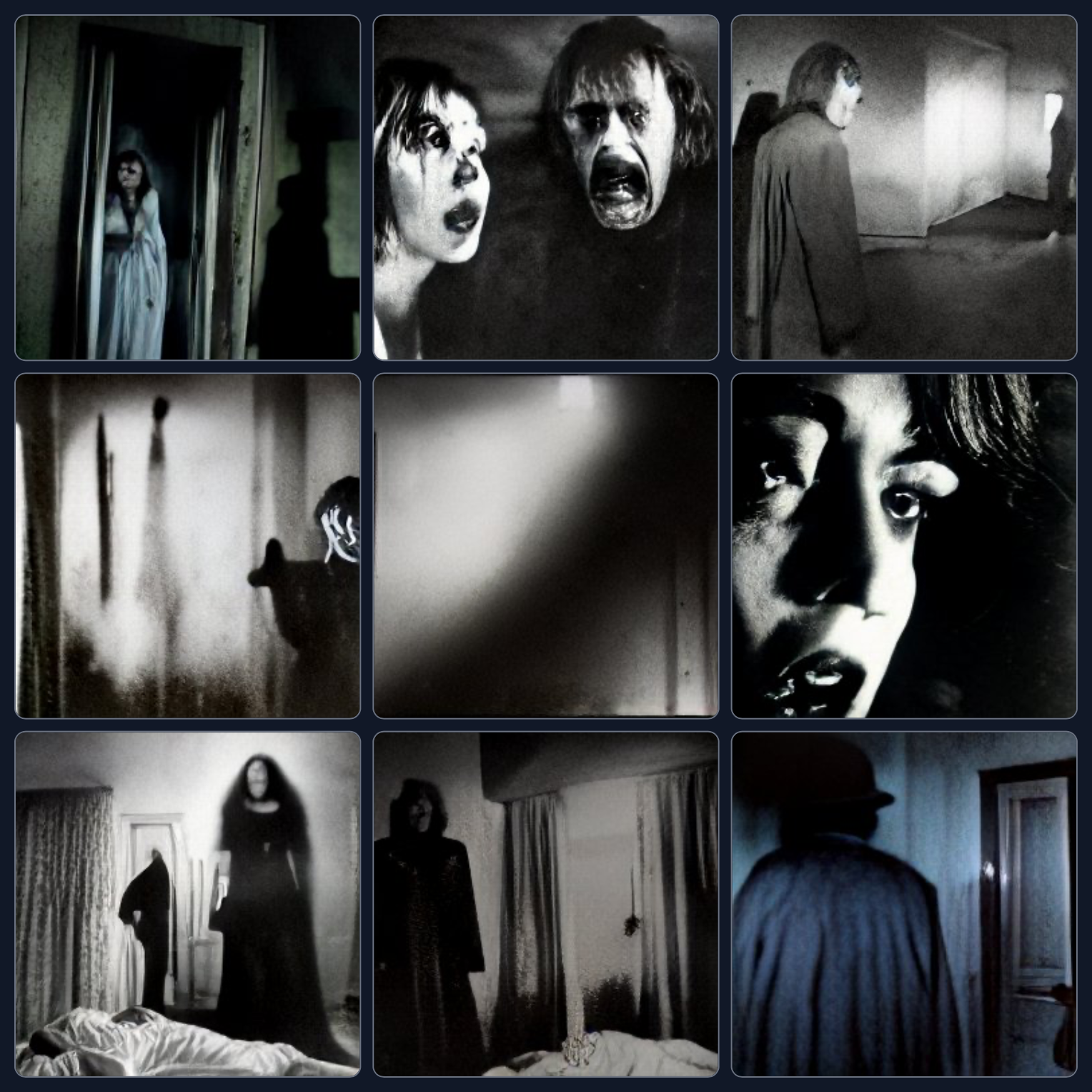

Okay, let’s try moving away from Asia, to Europe, beginning with: Scene from a French horror film:

Now our walls have curtains and doors. We’ve gone back to a mostly black and white palette. And the long-haired ghost girl is replaced by a range of spooky figures with recent haircuts, or horrified victims – particularly that anguished-looking close-up of the woman’s face at centre right. In the bottom left we have what might be a witch hovering by someone’s bedside, waiting to bestow a curse. Definitely a more European classic cinema vibe here.

I’ve also seen a couple of German films recently, which have been fairly modern and based around teenagers getting into spooky situations. Honestly they felt more like Scooby Doo than serious horror films. So let’s try: Scene from a German horror film:

Oooh. Getting some Max Schreck Nosferatu vibes here, although not too explicitly. The exterior farmhouse at top middle is interesting – the first identifiably exterior scene generated so far. Good choice though because, as we all know, farmhouses are 90% more spooky than most other buildings. Definitely more of a vampire feel than ghosts here. And a couple of frames of Nazis, which I suppose is fair enough for the horror genre.

Now let’s try some English-speaking origins. We’ll start with: Scene from a British horror film:

Interesting. I’m not quite sure what to make of this. There seem to be a few people in masks, another creepy outdoor farmhouse, and in the bottom left what looks like a shadowy mob. Intriguing candlelight and shadows.

Contrast with: Scene from an American horror film:

There are definitely a lot more interior rooms here, with doors. I guess American horror hinges a lot more on people lurking through doorways.

And finally: Scene from an Australian horror film:

I’m not sure that anything here particularly implies Australia. It just seems to be some more semi-generic ghosty building stuff. I don’t know what that claw-like shadow is in the upper left panel, but it’s nice and spooky.
